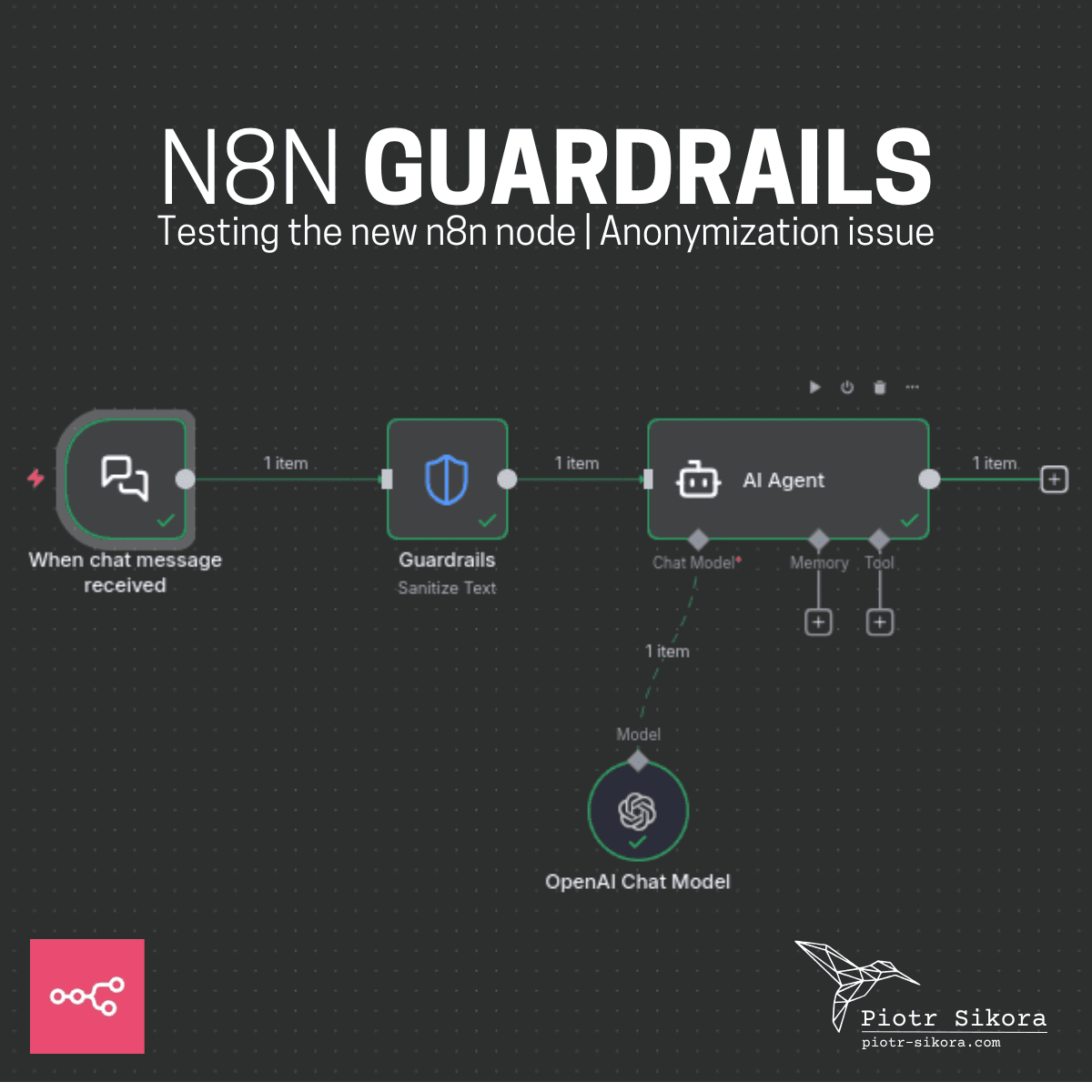

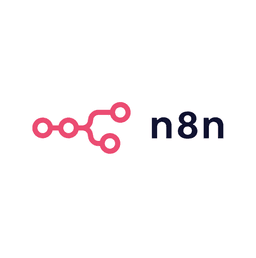

I’ve started testing the new Guardrails feature in n8n

When the Guardrails node was announced on October 30th, 2025, during the "n8n Livestream: AI Guardrails, Pinecone & Community Highlights", I was genuinely excited. This is exactly the kind of feature many AI-automation builders had been waiting for.

I ran several tests, and yes, it works really well. But there's one area where it could be significantly better.

Here's the challenge:

Let's say you're working with sensitive data that you want to anonymize before passing it into an AI Agent.

Example:

"I'm going to work with two emails: adam@adam.com and kate@kate.com"

After passing this through the n8n Guardrails node, you get:

"I'm going to work with two emails: <EMAIL_ADDRESS> and <EMAIL_ADDRESS>"

Perfect. For standard anonymization, that’s exactly what you want.

But what if you need to restore the data afterwards?

Imagine you’re generating a draft of a contract:

"The contractor identifies themselves with the email adam@adam.com and the client with kate@kate.com"

The email addresses repeat throughout the contract. You anonymize the text, process it with AI, and then want to map the addresses back.

Ideally, you’d get a mapping like:

- <EMAIL_ADDRESS_1>: adam@adam.com
- <EMAIL_ADDRESS_2>: kate@kate.com

That way, you could safely restore the original content after the AI analysis.
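The ideal behavior above can be sketched in a few lines of TypeScript. n8n's Code node accepts JavaScript, so the type annotations would need to be stripped before pasting it there. This is not how the Guardrails node works. It is a minimal sketch that assumes a simplified email regex and a hypothetical `<EMAIL_ADDRESS_n>` token format: repeated addresses reuse the same numbered token, and a lookup table restores them afterwards.

```typescript
// Minimal sketch: reversible, indexed email pseudonymization.
// Hypothetical token format <EMAIL_ADDRESS_n>; the regex is simplified
// and will not catch every valid address.

type Mapping = Record<string, string>; // token -> original value

const EMAIL_RE = /[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}/g;
const TOKEN_RE = /<[A-Z_]+_\d+>/g;

function pseudonymizeEmails(text: string): { text: string; mapping: Mapping } {
  const mapping: Mapping = {};
  const tokenByEmail = new Map<string, string>(); // original value -> token
  const anonymized = text.replace(EMAIL_RE, (email) => {
    // Reuse the token for a repeated address so every occurrence maps back to the same value.
    let token = tokenByEmail.get(email);
    if (token === undefined) {
      token = `<EMAIL_ADDRESS_${tokenByEmail.size + 1}>`;
      tokenByEmail.set(email, token);
      mapping[token] = email;
    }
    return token;
  });
  return { text: anonymized, mapping };
}

function restore(text: string, mapping: Mapping): string {
  // Swap each known token back to its original value; leave unknown tokens untouched.
  return text.replace(TOKEN_RE, (token) =>
    Object.prototype.hasOwnProperty.call(mapping, token) ? mapping[token] : token
  );
}

const contract =
  "The contractor identifies themselves with the email adam@adam.com " +
  "and the client with kate@kate.com. Invoices go to adam@adam.com.";
const result = pseudonymizeEmails(contract);
console.log(result.text);
console.log(result.mapping);
console.log(restore(result.text, result.mapping) === contract);
```

Running it prints the anonymized contract, with adam@adam.com appearing as `<EMAIL_ADDRESS_1>` in both places. It then prints the lookup table and `true` for the round trip. Keying the table by token, rather than only by position, lets the AI reorder or repeat the placeholders without breaking the restore.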

But with the current Guardrails implementation?

Every email becomes the same token: <EMAIL_ADDRESS>

- No index.
- No unique ID.
- No linkage.

Meaning: you can't restore anything.

And it's the same story with:

- bank account numbers
- phone numbers

Basically, any data you want to anonymize and then restore later.
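The same idea extends to these other data types. The TypeScript sketch below runs one regex per type, keeps a separate counter per label, and records every token in a single lookup table. The labels `BANK_ACCOUNT` and `PHONE_NUMBER` and all three patterns are illustrative assumptions. They are not the Guardrails node's entity names or detection logic, and real bank account and phone formats vary far more than these regexes allow. Rule order matters here: IBAN-like bank accounts are replaced before phone numbers so that the phone pattern cannot grab the digit groups inside an IBAN.

```typescript
// Sketch: reversible pseudonymization for several PII types.
// Labels and regexes are hypothetical and deliberately simplified.

interface Rule {
  label: string;
  pattern: RegExp; // needs the global flag so every match is replaced
}

const RULES: Rule[] = [
  { label: "EMAIL_ADDRESS", pattern: /[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}/g },
  // IBAN-like: country code, two check digits, 11-30 alphanumerics with optional spaces.
  { label: "BANK_ACCOUNT", pattern: /\b[A-Z]{2}\d{2}(?: ?[A-Z0-9]){11,30}\b/g },
  // Phone-like: optional "+", then 9+ digits, spaces or dashes, starting and ending with a digit.
  { label: "PHONE_NUMBER", pattern: /\+?\d[\d -]{7,}\d/g },
];

function pseudonymize(text: string, rules: Rule[] = RULES) {
  const mapping = new Map<string, string>(); // token -> original value
  const tokenByValue = new Map<string, string>(); // "LABEL|value" -> token
  const counters = new Map<string, number>(); // label -> last index used
  let out = text;
  for (const rule of rules) {
    out = out.replace(rule.pattern, (value) => {
      const key = `${rule.label}|${value}`;
      let token = tokenByValue.get(key);
      if (token === undefined) {
        const n = (counters.get(rule.label) ?? 0) + 1;
        counters.set(rule.label, n);
        token = `<${rule.label}_${n}>`;
        tokenByValue.set(key, token);
        mapping.set(token, value);
      }
      return token;
    });
  }
  return { text: out, mapping };
}

function restoreAll(text: string, mapping: Map<string, string>): string {
  let out = text;
  // split/join replaces every occurrence; the closing ">" keeps <X_1> from matching <X_10>.
  mapping.forEach((value, token) => {
    out = out.split(token).join(value);
  });
  return out;
}

const message =
  "Contact adam@adam.com or +48 600 700 800. " +
  "Pay to DE89 3704 0044 0532 0130 00, cc adam@adam.com.";
const demo = pseudonymize(message);
console.log(demo.text);
console.log(restoreAll(demo.text, demo.mapping) === message);
```

With this input, the anonymized text reads `Contact <EMAIL_ADDRESS_1> or <PHONE_NUMBER_1>. Pay to <BANK_ACCOUNT_1>, cc <EMAIL_ADDRESS_1>.` and the round trip restores the original message.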

n8n Guardrails anonymization issue: possible solutions

Have any of you tried solving this in n8n?

- A preprocessing node?
- A local AI model?
- A custom replacer?
- Regex + a lookup table?
- External anonymization before Guardrails?

I'm curious whether anyone has already experimented with this pattern.
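If you go the regex + lookup table route, it may be worth adding one check between the AI Agent and the restore step. A language model can drop a placeholder, rewrite it, or emit a token-shaped string that was never in the table. The sketch below is an assumption about how such a check could look, using the same hypothetical `<LABEL_n>` token format. It refuses to restore when it finds unknown tokens and reports any tokens that disappeared, leaving the caller to decide whether that is acceptable.

```typescript
// Sketch: validate AI output against the lookup table, then restore it.
// Assumes hypothetical tokens of the form <LABEL_n>, e.g. <EMAIL_ADDRESS_1>.

const PLACEHOLDER_RE = /<[A-Z_]+_\d+>/g;

interface RestoreResult {
  text: string; // AI output with the original values put back
  missing: string[]; // tokens from the table that the model dropped
}

function restoreChecked(aiOutput: string, table: Record<string, string>): RestoreResult {
  const has = (token: string) => Object.prototype.hasOwnProperty.call(table, token);
  const found = new Set<string>(aiOutput.match(PLACEHOLDER_RE) ?? []);

  // Refuse to restore if the model produced tokens that were never issued.
  const unknown = Array.from(found).filter((token) => !has(token));
  if (unknown.length > 0) {
    throw new Error(`Unknown tokens in AI output: ${unknown.join(", ")}`);
  }

  return {
    text: aiOutput.replace(PLACEHOLDER_RE, (token) => table[token]),
    missing: Object.keys(table).filter((token) => !found.has(token)),
  };
}

const lookup: Record<string, string> = {
  "<EMAIL_ADDRESS_1>": "adam@adam.com",
  "<EMAIL_ADDRESS_2>": "kate@kate.com",
};
const checked = restoreChecked("Contractor: <EMAIL_ADDRESS_1>. Client: <EMAIL_ADDRESS_2>.", lookup);
console.log(checked.text); // Contractor: adam@adam.com. Client: kate@kate.com.
console.log(checked.missing); // []
```

Throwing on unknown tokens is a deliberately strict choice. In a contract, a silently unrestored or hallucinated placeholder is probably worse than a failed run.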

Test this workflow in your n8n instance

Copy the code below and test it in your n8n instance. The Guardrails node is great and saves a lot of time!

You can find the workflow here: guardrails-testing.json

Or just copy the JSON below:

```json
{
  "nodes": [
    {
      "parameters": {
        "options": {}
      },
      "type": "@n8n/n8n-nodes-langchain.chatTrigger",
      "typeVersion": 1.4,
      "position": [0, 0],
      "id": "5079125a-2e47-47ae-b62c-b1f3c7c848a4",
      "name": "When chat message received",
      "webhookId": "c2723852-3b0b-4745-98e9-d31b1e26f8c6"
    },
    {
      "parameters": {
        "operation": "sanitize",
        "text": "={{ $json.chatInput }}",
        "guardrails": {
          "pii": {
            "value": {
              "type": "all"
            }
          }
        }
      },
      "type": "@n8n/n8n-nodes-langchain.guardrails",
      "typeVersion": 1,
      "position": [208, 0],
      "id": "61b4fc9a-c92f-4374-9e36-a948c3d5f981",
      "name": "Guardrails"
    },
    {
      "parameters": {
        "promptType": "guardrails",
        "options": {}
      },
      "type": "@n8n/n8n-nodes-langchain.agent",
      "typeVersion": 3,
      "position": [416, 0],
      "id": "0b88947e-5461-4b46-bec9-24c3bf12e2ec",
      "name": "AI Agent"
    },
    {
      "parameters": {
        "model": {
          "__rl": true,
          "mode": "list",
          "value": "gpt-4.1-mini"
        },
        "builtInTools": {},
        "options": {}
      },
      "type": "@n8n/n8n-nodes-langchain.lmChatOpenAi",
      "typeVersion": 1.3,
      "position": [368, 256],
      "id": "42b42139-8ede-48d8-872f-8efc01aa4b6d",
      "name": "OpenAI Chat Model",
      "credentials": {
        "openAiApi": {
          "id": "7lmHcPMAjhsZFa1f",
          "name": "OpenAi account"
        }
      }
    }
  ],
  "connections": {
    "When chat message received": {
      "main": [
        [
          {
            "node": "Guardrails",
            "type": "main",
            "index": 0
          }
        ]
      ]
    },
    "Guardrails": {
      "main": [
        [
          {
            "node": "AI Agent",
            "type": "main",
            "index": 0
          }
        ]
      ]
    },
    "OpenAI Chat Model": {
      "ai_languageModel": [
        [
          {
            "node": "AI Agent",
            "type": "ai_languageModel",
            "index": 0
          }
        ]
      ]
    }
  },
  "pinData": {},
  "meta": {
    "templateCredsSetupCompleted": true,
    "instanceId": "2295c029f4cb86c8f849f9c87dade323734dc279619eb9e2704f8473c381e4d1"
  }
}
```
